Who Is This For?

Why AI researchers need to ask more "should we" questions along with their "can we" questions.

Hi there! Thanks for being here and welcome back to the newsletter! Today we’re looking at a paper that makes me go:

My last post focused on Perfect Corp.’s AI Personality Finder, a product marketed as being able to predict your personality along 5 dimensions — extraversion, agreeableness, openness, conscientiousness, and neuroticism — just by scanning your face.

My gut reaction then was: bullshit.

But I decided to give it the benefit of the doubt and dig into the science behind the marketing. I’m back to report my findings, and my new reaction is: bullshit!

How the AI “Works”

Perfect Corp. doesn’t publish its particular methodology. But I was able to find a slew of recent AI papers on the topic of personality prediction from facial features.

The paper we’ll be looking at today is titled “Identifying Big Five personality traits based on facial behavior analysis,” and it is fairly representative of what most papers in this research area do. Let’s start by taking the research at face value. The authors of this work gathered 82 participants: two-thirds male, one-third female, and mostly young, with an average age of 22 years.[1]

Each participant talked into a camera for 90 seconds, then filled out a personality self-assessment. The idea was to train an AI model that predicts a user’s personality scores (output) based on their video (input). Here’s a diagram of how their system works:

For non-visual learners, here’s the gist: the authors used pre-trained AI models to extract 70 tracking points key to facial expressions from every video frame. Nothing new there — facial keypoint extractors have been around for a long time and the authors of this work used an off-the-shelf model. For each keypoint they calculated four statistics — the average position of the point in the image, in both the left-right and up-down dimensions, and how much the point moved over time, in both the left-right and up-down dimensions. They corrected all of these measurements for movement of the whole head.
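To make the feature step concrete, here is a minimal Python sketch. It assumes the keypoints have already been extracted into an array of (x, y) coordinates per frame. Several details are my own assumptions and not the authors’ published method: the function name, the use of standard deviation to measure “how much the point moved,” and the head-motion correction. For that correction, the sketch subtracts each frame’s mean keypoint position, which cancels only side-to-side and up-down head shifts. With 70 keypoints and four statistics each, every video collapses to 280 numbers.

```python
import numpy as np

def keypoint_features(frames: np.ndarray) -> np.ndarray:
    """Summarize a video's facial keypoints as four statistics per point.

    frames: shape (n_frames, n_points, 2), the (x, y) position of each
    keypoint in each frame. Returns shape (n_points * 4,).
    """
    # Assumed head-motion correction: subtract each frame's mean keypoint
    # position so that translation of the whole head cancels out.
    centered = frames - frames.mean(axis=1, keepdims=True)

    mean_pos = centered.mean(axis=0)  # (n_points, 2): average x and average y
    movement = centered.std(axis=0)   # (n_points, 2): spread in x and in y over time

    # One row per point, [mean_x, mean_y, std_x, std_y], flattened into a vector.
    return np.concatenate([mean_pos, movement], axis=1).reshape(-1)
```

Because the correction subtracts a per-frame average, shifting every point in a frame by the same amount leaves the features unchanged. That is the property a head-movement correction is meant to have, though the authors may achieve it differently.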

Based on these four statistics they trained a model to predict users’ personality scores.
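The description above doesn’t pin down which model the authors trained. The Python sketch below therefore swaps in closed-form ridge regression purely as a stand-in. The data are random placeholders shaped like the study: 82 participants, 280 features each (assuming all 70 × 4 statistics are used), and five trait scores per person. Note that with these numbers there are more features than participants.

```python
import numpy as np

def fit_ridge(X: np.ndarray, Y: np.ndarray, alpha: float = 1.0) -> np.ndarray:
    """Closed-form ridge regression (no intercept): W = (X^T X + alpha * I)^-1 X^T Y."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ Y)

rng = np.random.default_rng(0)
X = rng.normal(size=(82, 280))  # placeholder features: 82 participants x 280 numbers
Y = rng.normal(size=(82, 5))    # placeholder Big Five scores, one column per trait

W = fit_ridge(X, Y)             # (280, 5) weight matrix
predicted_scores = X @ W        # (82, 5): one predicted score per participant per trait
```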

How the Psychology “Works”

The last step, step 4 in the diagram, is where the novelty lies, and where the magic would happen if there were any magic to be found. This is where we have to turn to the psychology.

One of the most cited psychology papers I found was this one, from 2003, “Can you judge a book by its cover? Evidence of self–stranger agreement on personality at zero acquaintance.”

All you really need to understand from this paper is what this “self-stranger agreement” is. It’s an important idea because it’s the main tool psychologists use to test whether personality can be predicted from facial features.

Basically, each participant in such a study takes a personality quiz. Then each participant looks at photos of other participants and guesses their personalities. The studies measure how much the self-assessments align with the stranger assessments.
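In code, the core measurement might look something like the minimal Python sketch below. The function name is hypothetical. Averaging each person’s stranger ratings into one consensus guess before correlating is my assumption about a typical setup; individual studies may aggregate ratings differently.

```python
import numpy as np

def self_stranger_agreement(self_scores, stranger_ratings):
    """Correlate self-reported scores with the average stranger rating.

    self_scores: shape (n_targets,), each participant's own score on one trait.
    stranger_ratings: shape (n_targets, n_raters), strangers' guesses about each target.
    """
    self_scores = np.asarray(self_scores, dtype=float)
    consensus = np.asarray(stranger_ratings, dtype=float).mean(axis=1)  # one averaged guess per target
    return np.corrcoef(self_scores, consensus)[0, 1]                    # Pearson r

# Toy example with made-up numbers: four targets, three strangers rating each.
self_scores = [12, 18, 25, 30]
stranger_ratings = [[15, 20, 10], [14, 22, 19], [20, 18, 24], [21, 17, 23]]
agreement = self_stranger_agreement(self_scores, stranger_ratings)
```

An agreement of 1 would mean strangers’ averaged guesses rise and fall in perfect lockstep with people’s self-reports. An agreement of 0 would mean no linear relationship at all.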

Holes in the Psychology

Feel free to skip this section if you’re short on time — you don’t have to read it to understand my main point. But I still think it’s crazy enough to mention.

Let me say here that I’m not a psychologist. But I am a researcher, and I can say this is a method rife with flaws. First of all, personality tests notoriously demonstrate a great deal of variance. And measuring self-stranger agreement only introduces more variance: you’re asking someone to make an unreliable prediction about a stranger’s unreliable assessment of themselves.
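To see why stacking one noisy measurement on another matters, here is a small Python simulation in which every number is made up. It has a hypothetical “true” trait, a self-report that adds noise to it, and a stranger’s guess that is only weakly tied to it. The stranger’s guess is exactly as good in both comparisons. The only difference is whether we score it against the true trait or against the noisy self-report.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 100_000  # large simulated sample so the correlations are stable

trait = rng.normal(size=n)                         # hypothetical "true" trait
self_report = trait + rng.normal(size=n)           # self-assessment: trait plus equal-sized noise
stranger_guess = 0.5 * trait + rng.normal(size=n)  # stranger's judgment: weakly related to the trait

r_with_truth = np.corrcoef(stranger_guess, trait)[0, 1]       # about 0.45
r_with_self = np.corrcoef(stranger_guess, self_report)[0, 1]  # about 0.32
```

With these made-up noise levels, the measured agreement shrinks to roughly √0.5 ≈ 0.71 times its true value. This is the standard attenuation effect from classical test theory: noise in either measure drags the observed correlation down.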

Second of all, personalities are shaped in part by how others treat you. And how others treat you is shaped by their assumptions about you. So in measuring how well strangers’ assumptions about a person align with their personality, you may just be measuring how much the cause aligns with the effect.

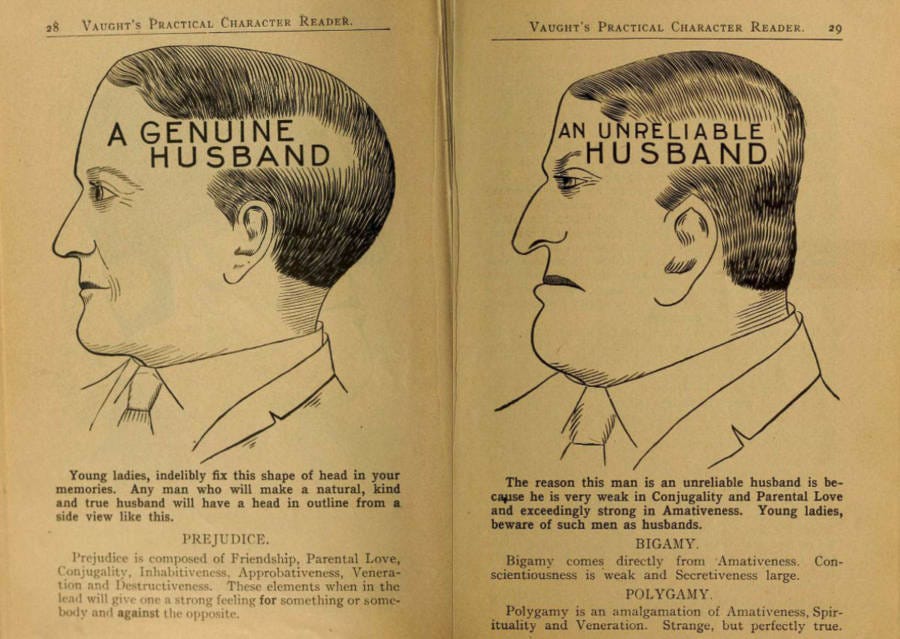

On a more tangential note, I was shocked to find the paper’s introduction citing phrenology as a basis for the work. Phrenology, for those unfamiliar, “is a pseudoscience that involves the measurement of bumps on the skull to predict mental traits,” to quote Wikipedia. It has a dark history of being used to support theories of racial hierarchies — in particular, the idea that different head shapes among different races prove that some groups are biologically predisposed to violence, submissiveness, or stupidity. Phrenology was prominently used to justify Nazi racial ideology, the idea being that head measurements could be used to separate Aryans from non-Aryans and explain the superior mental faculties of the former.

Remember that scene from “Django Unchained” in which Leonardo DiCaprio breaks open the skull of a Black man to demonstrate the three bumps on the back of the head that predestine servitude? That’s phrenology.

If the paper mentioned phrenology in passing, this digression would be a cheap shot. But the entire first paragraph is about phrenology, and it concludes that

although no scientific evidence was found to support the ideas of phrenology and physiognomy (Alley, 1988, Cohen, 1973) there does appear to be a strong lay belief that external features, in particular the face, do provide information about a person's character.

Which raises the question: if there’s no scientific evidence to back phrenology, why open a scientific paper by talking about it? The next paragraph is no better: it cites surveys documenting this lay belief to conclude things like

ninety percent of students believed that the face was a useful source of information about a person's personality.

Pollsters aside, we don’t validate scientific theories by asking a bunch of people, “Hey, does this seem right?” Before Copernicus dropped heliocentrism, there would have been a consensus that the Earth is the center of the universe. That doesn’t make it true, and it’s not how we conduct science.

What the Psychology Says

In the end, the authors of the “Can you judge a book by its cover?” paper find that

significant self–stranger agreement was found for psychoticism but not for extraversion or neuroticism

So, they find a correlation only for one of the three things they were trying to predict. And that correlation has a coefficient of r=0.355. For those who don’t know, a correlation coefficient measures the following: if you have a potential cause and effect, and you make a graph with the cause on the x-axis and the effect on the y-axis, you get a sort of cloud of scattered points. If you then draw a line through this cloud — but not just any line, the line that best fits the cloud — how closely does the data stick to this line? Intuitively, this tells you how linearly related the potential cause is to the effect.
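For readers who like to see the arithmetic, here is a minimal Python sketch of that definition; the function names are my own. It computes Pearson’s r directly and checks the “how closely does the data stick to the line” intuition. For a straight-line fit with an intercept, r² equals the fraction of the spread in y that the best-fit line accounts for.

```python
import numpy as np

def pearson_r(x, y):
    """Pearson correlation: how tightly (x, y) pairs cluster around a straight line."""
    x, y = np.asarray(x, dtype=float), np.asarray(y, dtype=float)
    dx, dy = x - x.mean(), y - y.mean()
    return (dx @ dy) / np.sqrt((dx @ dx) * (dy @ dy))

def fraction_explained_by_line(x, y):
    """1 - (scatter around the best-fit line) / (scatter around the mean of y)."""
    x, y = np.asarray(x, dtype=float), np.asarray(y, dtype=float)
    slope, intercept = np.polyfit(x, y, deg=1)  # least-squares best-fit line
    residuals = y - (slope * x + intercept)
    return 1 - residuals.var() / y.var()

# Made-up cloud of points with a weak linear trend.
rng = np.random.default_rng(1)
x = rng.normal(size=500)
y = 0.4 * x + rng.normal(size=500)

r = pearson_r(x, y)
print(r, r**2, fraction_explained_by_line(x, y))  # the last two numbers match
```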

For reference, here are a few sample datasets, each labeled with its correlation coefficient:

Generally, an r-value of 0.2 to 0.4 is considered a weak correlation. The paper in question reports a correlation of 0.355 as its best result. It’s not exactly a slam dunk.
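A common way to put an r of 0.355 in perspective is to square it. The result is the share of the variance in one measure that a best-fit line through the other accounts for:

```latex
r^2 = 0.355^2 \approx 0.126
```

So a straight line through strangers’ judgments accounts for only about 13% of the variation in self-reported psychoticism, leaving roughly 87% unexplained.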

How the Psychology Bottlenecks the AI

What this paper and others on the topic get wrong is that they appeal to some nonexistent magic in the math behind AI, expecting it to discover a pattern for predicting personality from faces that humans can’t find.

I understand this temptation. There is something magic about hitting Run on a training session and watching the model discover patterns in the data. There is a part of me that thinks, “Hey, if AI can do this, why couldn’t it predict, say, personalities from faces?”

But the rule of thumb remains: garbage in, garbage out. An AI system is bottlenecked by the quality of its data. Even if a system worked perfectly, reproducing its training labels with 100% accuracy, it would still be wrong wherever those labels are wrong: if 60% of the data is wrong, 60% of its outputs will be wrong too.
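A toy Python simulation makes that arithmetic concrete. It simplifies the labels to a yes/no trait and defines the “perfect” model as one that simply reproduces its training labels. Both choices are assumptions for illustration, and none of the numbers come from the paper.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 10_000
truth = rng.integers(0, 2, size=n)  # hypothetical ground-truth yes/no labels
corrupted = rng.random(n) < 0.6     # roughly 60% of the training labels are wrong
labels = np.where(corrupted, 1 - truth, truth)

# A "perfect" model: it reproduces its training labels exactly.
predictions = labels.copy()

accuracy_vs_labels = (predictions == labels).mean()  # 1.0
accuracy_vs_truth = (predictions == truth).mean()    # about 0.4
```

Scored against its own labels, the model looks flawless. Scored against reality, it is right only about 40% of the time.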

Likewise, if you’ll allow a very strange example, I could train a system to predict how many hats someone owns based on how many blueberries they eat. I could train this system perfectly, to reach 100% accuracy. Its predictions would still be meaningless because the data is meaningless.
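Here is a hedged Python illustration of that point. It uses ordinary least squares (my choice, not the paper’s model) on data that is pure random noise. The data are shaped like the study’s 82 participants and 280 facial statistics, assuming all 70 × 4 statistics feed the model. Because there are more features than people, the fit can match every training score essentially exactly. Yet it tells you nothing about new people drawn from the same noise.

```python
import numpy as np

rng = np.random.default_rng(7)
n_people, n_features = 82, 280               # 82 participants, 70 keypoints x 4 statistics
X = rng.normal(size=(n_people, n_features))  # pure-noise "features" (the blueberries)
y = rng.normal(size=n_people)                # pure-noise "scores" (the hats)

# Minimum-norm least-squares fit; with more features than people it can match y exactly.
w, *_ = np.linalg.lstsq(X, y, rcond=None)
train_error = np.abs(X @ w - y).max()        # essentially zero: a "perfect" fit

# Fresh noise from the same source: the "perfect" model has nothing real to go on.
X_new = rng.normal(size=(n_people, n_features))
y_new = rng.normal(size=n_people)
test_corr = np.corrcoef(X_new @ w, y_new)[0, 1]  # near zero, up to chance
```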

The paper we’re investigating is blueberries and hats dressed up with a few bells and whistles. Nowhere is this clearer than in the conclusion of its abstract, which reads

through correlation analysis between facial features and personality scores, we found that the points from the right jawline to the chin contour showed a significant negative correlation with agreeableness.

In other words, by checking how snatched your right (not left!) profile is, these researchers can predict how nice you are. You don’t have to be an AI researcher to find that fishy.

All in all, this reads as phrenology with extra steps.

“Should we?” vs. “Can we?”

Why did the authors write this paper? They compile a wide range of potential applications in tourism, medicine, education, and finance, which include the prediction of depression and anxiety, leader performance, compulsive shopping, sales performance, and political attitudes.

The use case they choose to highlight is, I’m sure, their best attempt at identifying a socially beneficial application. Spoiler: it falls flat. Here goes anyway: they suggest that social media companies like TikTok can use “user-shared video data” to “identify… personality traits.” By automating this process, TikTok could “solve the problem that users are unwilling to self-report” such traits. Why would these automated results be useful, you ask? Because

on the internet, adolescents are dominant, and their personality and mental health are closely related. Enterprises may provide better mental health services based on non-invasive personality identification.

In case you missed it (I doubt you did), let me run that back: By using AI on videos of teens scraped without their knowledge from social media, tech companies could predict personality traits that adolescents are expressly “unwilling to self-report.”

Frankly, it’s genius. It’s a “non-invasive” way to invade— sorry, circumvent, people’s explicit boundaries. But at least you’re only doing it to send them to therapy.

This is a pretty egregious example of a pattern I’ve noticed in AI: papers that reflect a complete failure, if not an unwillingness, to ask basic “should we” questions instead of “can we” questions. Yes, as the paper suggests, this could hypothetically be used by social media platforms to identify teens disproportionately likely to suffer mental health problems. Putting aside that this is not really “non-invasive,” as the paper claims three times, this once again raises the question: who asked for this?

You know what this is far more likely to get used for? The same kinds of questionable psychometric profiling tests that automated hiring pipelines already employ. Or any of the other applications the paper names (predictions of depression and anxiety, leader performance, and political attitudes), which are also, importantly, things I don’t want institutions to predict about me. If these predictions exist, they will be used. If they’re not used, they shouldn’t exist.

I’ve compiled this into a flowchart, which I call the “Should You Build It Diagram for AI.”

This is an important point. As AI researchers and academics, we find it easy to pretend that our exalted position in the ivory tower lends us a priest-like academic license to live the life of the mind, free from worldly considerations. It doesn’t: we don’t get to abdicate the responsibility to consider how our work could be used once released into the wild west of the Internet. Not just how we would use it, and not just how it’s intended to be used, but how it could be used.

The Verdict

My feeling on this work is that the psychology is flimsy, the AI is even flimsier, and the potential use cases are distasteful. The niche potential upsides to this research are outweighed by the glaring negative externalities.

It’s a good reminder that AI researchers have to earnestly ask “should we” questions rather than retroactively searching for palatable use cases to justify preexisting “can we” questions of academic interest.

So, in closing: who is this paper for? No one.

[1] The only stipulation the authors cited was that their participants had to be

disease-free (free from any mental illness identified by a psychiatrist, no disease affecting the face and facial expressions, and in good health)

I’m not here to pick a semantic battle, but… Yeesh. The delivery could use some work.